Artificial intelligence (AI) has come a long way since its inception, revolutionizing various industries and shaping our daily lives in ways we never imagined. In this article, we take a trip down memory lane to explore the fascinating journey of AI, from its early beginnings to the cutting-edge advancements of today. Join us as we delve into the historical perspective of AI, uncovering the key milestones, challenges, and breakthroughs that have shaped this remarkable field. Get ready to be intrigued by the evolution of AI and its profound impact on our world.

The Evolution of Artificial Intelligence

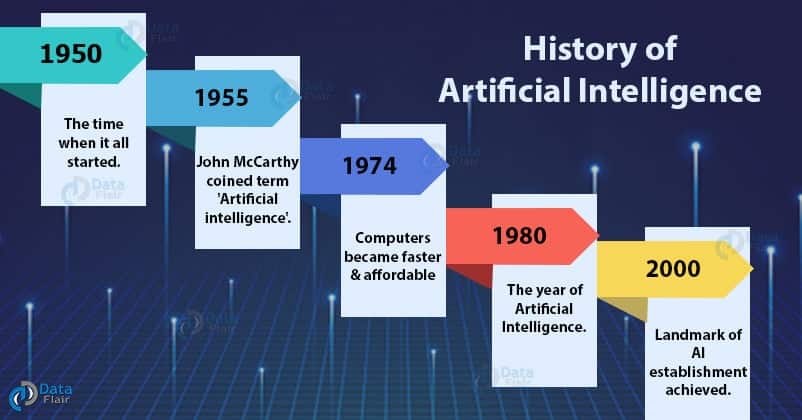

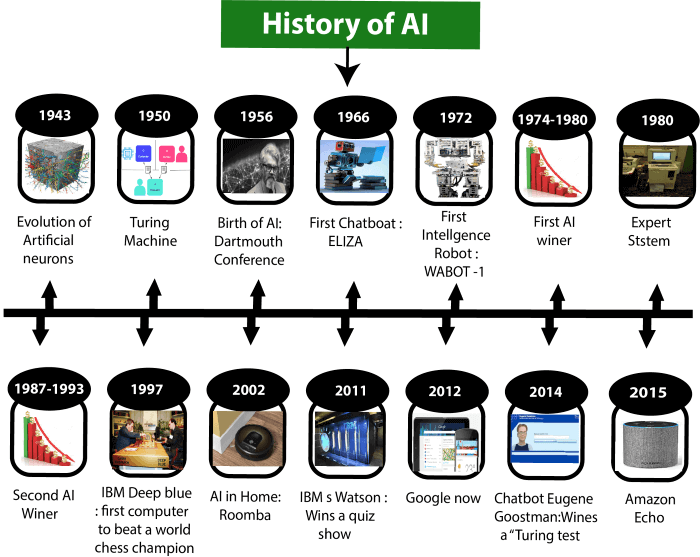

From mechanical automata to deep learning algorithms, AI has repeatedly pushed the boundaries of what machines can do, with a series of milestones shaping its development. In the sections that follow, we trace its origins, its major breakthroughs and setbacks, and the implications of this transformative technology for the future.

Early Beginnings

The Origins of AI

The roots of AI can be traced back to ancient times, when people first imagined creating intelligent beings. The idea of artificial life appears in mythology and folklore, in stories of animated statues and mechanical creatures such as Talos, the bronze giant that guarded Crete in Greek myth. These early imaginings foreshadowed the ambitions that AI would later pursue.

Mechanical Automata

From antiquity through the Middle Ages, inventors and craftsmen built mechanical devices, known as automata, designed to replicate human and animal movements. Examples range from the devices described by Hero of Alexandria in the first century AD to the water-powered automata of the engineer al-Jazari in the early 13th century. These intricate creations fascinated audiences with their seemingly lifelike behavior. Although they were not AI in the modern sense, they shaped the public's perception of what machines might be capable of.

First AI-Related Concepts

The idea that thought itself might be mechanized took shape in the 17th century, in the work of philosophers such as Thomas Hobbes and René Descartes. Hobbes argued in Leviathan (1651) that reasoning is nothing more than "reckoning," a kind of computation. Descartes, by contrast, considered whether machines could imitate human behavior but concluded that they could never truly reason or use language as humans do. These debates laid the groundwork for later thinking about AI.

The Dartmouth Workshop and McCarthyism

The Dartmouth Workshop

The birth of AI as a formal field is usually traced to the Dartmouth Workshop, held in the summer of 1956. The 1955 proposal for the workshop, written by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, coined the term "artificial intelligence." The workshop brought together researchers interested in creating "thinking machines" and marked a pivotal moment in the history of AI, sparking a surge of interest and research in the field.

The Impact of McCarthyism

The political climate of the era is sometimes said to have hampered the young field, but the timeline does not support this. McCarthyism, the anti-communist campaign associated with Senator Joseph McCarthy (no relation to John McCarthy), peaked in the early 1950s and had largely subsided before the Dartmouth Workshop. Cold War priorities actually worked in AI's favor: through the 1960s, the U.S. Advanced Research Projects Agency (ARPA) funded AI research generously at institutions such as MIT, Stanford, and Carnegie Mellon.

Cognitive Science and the Birth of AI

Cognitive Science Emerges

In the 1950s, the so-called cognitive revolution began to draw together psychology, linguistics, and computer science; the interdisciplinary field that grew out of it was later named cognitive science. Cognitive scientists sought to understand how the mind processes information and how intelligent behavior is generated. AI and cognitive science developed side by side, each borrowing ideas from the other, which helped establish AI as a scientific field in its own right.

The Birth of AI as a Field

With these ideas in circulation, AI moved from theoretical speculation to working programs. Researchers developed formal methods, algorithms, and programming languages aimed at mimicking human problem-solving. The Logic Theorist (1956), written by Allen Newell, Herbert Simon, and Cliff Shaw, proved theorems from Whitehead and Russell's Principia Mathematica, and John McCarthy's LISP (1958) became a mainstay of AI research for decades.

The Turing Test

In 1950, British mathematician and computer scientist Alan Turing proposed what would become an iconic benchmark for AI. In his paper "Computing Machinery and Intelligence," he described an "imitation game," now known as the Turing Test, in which a machine succeeds if a human judge, conversing with it by text, cannot reliably tell it apart from a human. While the test remains a subject of philosophical debate, it gave researchers an early, concrete way to frame the question of machine intelligence.

Symbolic AI and Expert Systems

Symbolic AI

During the 1960s and 1970s, a dominant approach called symbolic AI gained prominence in the field. Symbolic AI focused on representing knowledge and reasoning using symbols and rules. Researchers developed systems that could manipulate symbolic information and draw logical inferences. Symbolic AI aimed to replicate human reasoning by formalizing knowledge in a logical framework.

Expert Systems

As a practical application of symbolic AI, expert systems aimed to capture the knowledge and problem-solving abilities of human experts in specific domains. These systems combined a knowledge base of if-then rules with an inference engine that applied those rules to the facts of a case to produce expert-level recommendations. Expert systems found applications in medical diagnosis (Stanford's MYCIN, for example, identified bacterial infections and recommended antibiotics), financial planning, and other domains.
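To make the rule-based approach concrete, here is a minimal forward-chaining sketch in Python. It assumes rules are simple if-then pairs over named facts; the rule base and the medical-sounding fact names are hypothetical illustrations, not real diagnostic knowledge, and real expert systems were far larger and often had to cope with uncertain evidence. The engine keeps firing any rule whose conditions are all known until nothing new can be derived, and it records which rules fired so it can explain its conclusion.

```python
# Hypothetical rule base: each rule is (set of required facts, fact to conclude).
# These facts are illustrative only, not real medical knowledge.
RULES = [
    ({"fever", "cough"}, "possible_flu"),
    ({"possible_flu", "short_of_breath"}, "refer_to_doctor"),
    ({"rash"}, "possible_allergy"),
]


def forward_chain(facts, rules):
    """Repeatedly fire rules whose conditions are all known, until no new facts appear."""
    known = set(facts)
    trace = []  # which rules fired, so the system can explain how it reached a conclusion
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                trace.append((sorted(conditions), conclusion))
                changed = True
    return known, trace


known, trace = forward_chain({"fever", "cough", "short_of_breath"}, RULES)
for conditions, conclusion in trace:
    print(f"{' AND '.join(conditions)} => {conclusion}")
```

Running it prints `cough AND fever => possible_flu` followed by `possible_flu AND short_of_breath => refer_to_doctor`: the first conclusion becomes a fact that enables the second rule, while the rash rule never fires.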

Connectionism and Neural Networks

The Rise of Connectionism

In the 1980s, connectionism re-emerged as a major paradigm in AI. Loosely inspired by the networks of neurons in the brain, connectionist models emphasized parallel processing and distributed representations. Unlike symbolic AI, which relied on explicit rules and logical reasoning, connectionism sought to learn from data, extracting patterns through interconnected nodes, or "artificial neurons." A 1986 paper by David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized backpropagation as a way to train networks with multiple layers.

The Perceptron

One of the earliest milestones in connectionism was the perceptron, a simple artificial neural network developed by psychologist Frank Rosenblatt in the late 1950s. The perceptron demonstrated the potential of neural networks for pattern recognition tasks. Its limitations became evident when Marvin Minsky and Seymour Papert showed, in their 1969 book Perceptrons, that a single-layer perceptron cannot learn functions such as XOR. Even so, it laid the groundwork for future advancements in neural network research.
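The perceptron's learning rule is simple enough to sketch in a few lines of Python. This is a minimal illustration, assuming binary inputs, a step activation, zero initial weights, and a learning rate of 1; the function names are hypothetical. After each example, the weights are nudged in the direction that would have reduced the error. Trained on the logical AND function, which is linearly separable, it converges to weights that classify all four inputs correctly. Trained on XOR, it cannot, because no single straight line separates XOR's two classes.

```python
def predict(weights, bias, x):
    """Step activation: output 1 if the weighted sum plus bias is positive, else 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(samples, epochs=10, lr=1.0):
    """Perceptron learning rule: adjust weights toward the target after each mistake."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Training sets: the truth tables of AND and XOR.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

for name, data in [("AND", AND), ("XOR", XOR)]:
    w, b = train_perceptron(data)
    correct = sum(predict(w, b, x) == y for x, y in data)
    print(f"{name}: {correct}/4 correct")
```

The AND run reports 4/4; the XOR run always gets at least one case wrong, however many epochs it is given.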

The Neural Network Renaissance

After the renewed enthusiasm of the 1980s, neural networks fell out of favor again in the 1990s and early 2000s. Limited computing power and scarce training data made larger networks hard to train, and other methods, such as support vector machines, often performed better. In the late 2000s, faster hardware (notably GPUs), much larger datasets, and improved training techniques triggered a neural network renaissance. A turning point came in 2012, when the deep convolutional network AlexNet won the ImageNet image-recognition competition by a wide margin, and deep learning models soon reached unprecedented accuracy on many complex tasks.

Knowledge-based Systems and Logic Programming

Knowledge-based Systems

During the 1970s and 1980s, knowledge-based systems gained popularity within the AI community. These systems aimed to store and apply extensive knowledge in specific domains. Knowledge-based systems utilized techniques such as rule-based reasoning, semantic networks, and ontologies to represent and reason with domain-specific knowledge. Despite their successes, knowledge-based systems faced challenges in handling uncertainty and scaling to large-scale problems.
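A semantic network can be sketched as a graph of concepts linked by "is-a" relationships, where properties are inherited from more general concepts unless a more specific concept overrides them. The minimal Python sketch below assumes a hand-built network stored in a dictionary; the concepts, properties, and the `lookup` function are hypothetical examples (the bird and penguin case is a classic textbook illustration). It also hints at the scaling problem: every exception, such as penguins not flying, has to be entered by hand.

```python
# Hypothetical semantic network: each concept has an optional "is_a" parent
# and a dictionary of properties stated directly on that concept.
NETWORK = {
    "animal": {"is_a": None, "props": {"alive": True}},
    "bird": {"is_a": "animal", "props": {"can_fly": True, "has_feathers": True}},
    "canary": {"is_a": "bird", "props": {"color": "yellow"}},
    "penguin": {"is_a": "bird", "props": {"can_fly": False}},  # exception to the bird default
}


def lookup(concept, prop):
    """Return prop for concept, inheriting along is_a links; the most specific value wins."""
    node = concept
    while node is not None:
        props = NETWORK[node]["props"]
        if prop in props:
            return props[prop]
        node = NETWORK[node]["is_a"]
    return None  # property not known anywhere in the hierarchy


print(lookup("canary", "can_fly"))   # True, inherited from "bird"
print(lookup("penguin", "can_fly"))  # False, overridden locally
print(lookup("penguin", "alive"))    # True, inherited from "animal"
```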

Logic Programming

Logic programming emerged as a powerful tool for AI in the 1970s. Heavily influenced by mathematical logic, it expresses programs as facts and rules and solves problems through logical inference. The best-known logic programming language is Prolog, which let programmers represent knowledge and build rule-based systems concisely. Prolog and other logic programming languages opened up new avenues for AI research and application development.

Prolog

Prolog, whose name comes from the French "programmation en logique" ("programming in logic"), was created in 1972 by Alain Colmerauer and Philippe Roussel in Marseille and became one of the most widely used logic programming languages in AI. Its declarative style and built-in inference mechanism make it well suited to knowledge representation and automated reasoning. Prolog has found applications in various domains, including natural language processing, expert systems, and robotics.
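Prolog answers a query by reasoning backward from the goal: it unifies the goal with the head of a clause, then tries to prove that clause's body, searching depth-first and left to right. The Python sketch below imitates that strategy for a tiny family database equivalent to the Prolog program `parent(tom, bob). parent(bob, ann). parent(bob, pat). grandparent(X, Z) :- parent(X, Y), parent(Y, Z).` It is a minimal illustration under simplifying assumptions: terms are Python strings and tuples, variables are strings starting with an uppercase letter, and there is no occurs check, cut, or built-in predicate. All function names are hypothetical, and real Prolog systems are far more sophisticated.

```python
import itertools

# Terms: a variable is a str starting with an uppercase letter (as in Prolog),
# a constant is any other str, and a compound term is a tuple (functor, arg1, ...).


def is_var(term):
    return isinstance(term, str) and term[:1].isupper()


def walk(term, subst):
    """Follow variable bindings until reaching a non-variable or an unbound variable."""
    while is_var(term) and term in subst:
        term = subst[term]
    return term


def unify(a, b, subst):
    """Return a substitution that makes a and b equal, or None if they cannot match."""
    a, b = walk(a, subst), walk(b, subst)
    if a == b:
        return subst
    if is_var(a):
        return {**subst, a: b}
    if is_var(b):
        return {**subst, b: a}
    if isinstance(a, tuple) and isinstance(b, tuple) and len(a) == len(b):
        for x, y in zip(a, b):
            subst = unify(x, y, subst)
            if subst is None:
                return None
        return subst
    return None


_counter = itertools.count()


def rename(term, suffix):
    """Give a clause fresh variable names each time it is used."""
    if is_var(term):
        return term + suffix
    if isinstance(term, tuple):
        return tuple(rename(t, suffix) for t in term)
    return term


def solve(goals, program, subst):
    """Prove goals depth-first, left to right, trying clauses in program order."""
    if not goals:
        yield subst
        return
    first, rest = goals[0], goals[1:]
    for head, body in program:
        suffix = f"_{next(_counter)}"
        s = unify(first, rename(head, suffix), subst)
        if s is not None:
            yield from solve([rename(g, suffix) for g in body] + rest, program, s)


def resolve(term, subst):
    """Substitute bindings all the way down to get a readable answer."""
    term = walk(term, subst)
    if isinstance(term, tuple):
        return tuple(resolve(t, subst) for t in term)
    return term


# Hypothetical family database; each clause is (head, body), and facts have an empty body.
program = [
    (("parent", "tom", "bob"), []),
    (("parent", "bob", "ann"), []),
    (("parent", "bob", "pat"), []),
    (("grandparent", "X", "Z"), [("parent", "X", "Y"), ("parent", "Y", "Z")]),
]

query = [("grandparent", "tom", "Who")]
answers = [resolve("Who", s) for s in solve(query, program, {})]
print(answers)  # ['ann', 'pat']
```

Because `solve` is a generator, answers are produced one at a time on backtracking, much as a Prolog interpreter returns further solutions on request.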

Machine Learning and Data-Driven AI

The Advent of Machine Learning

Machine learning, a subfield of AI, dates back to the 1950s: Arthur Samuel popularized the term in 1959 with a checkers-playing program that improved by playing against itself. Machine learning focuses on algorithms that enable machines to learn from data and improve their performance with experience. It gained real momentum from the 1990s onward, as the availability of vast amounts of data and advances in computational power paved the way for it to become the dominant approach in AI.

Supervised Learning

Supervised learning is one of the fundamental paradigms of machine learning. In this approach, a machine learning algorithm learns from labeled examples provided by humans. By training on a dataset with known inputs and expected outputs, the algorithm can generalize and make predictions on new, unseen data. Supervised learning has proven highly effective in various applications, including image recognition, natural language processing, and recommendation systems.
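As a minimal illustration of learning from labeled examples, the Python sketch below fits a straight line to (input, output) pairs using ordinary least squares, then predicts the output for an input it never saw during training. The data points are hypothetical and chosen to lie exactly on y = 2x + 1; practical supervised learning uses far richer models, but the pattern of fitting on labeled data and predicting on new data is the same.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = w * x + b, using the closed-form solution."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


# Hypothetical labeled training data: inputs paired with known outputs.
train_x = [1, 2, 3, 4]
train_y = [3, 5, 7, 9]

w, b = fit_line(train_x, train_y)
print(f"learned y = {w} * x + {b}")            # learned y = 2.0 * x + 1.0
print(f"prediction for x = 10: {w * 10 + b}")  # prediction for x = 10: 21.0
```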

Unsupervised Learning

In contrast to supervised learning, unsupervised learning aims to discover patterns and structures within unlabeled data. Unsupervised learning algorithms do not rely on predefined labels or human guidance but instead identify inherent patterns and relationships in the data. Clustering, dimensionality reduction, and generative modeling are common techniques used in unsupervised learning. This approach has found applications in fields such as anomaly detection, market segmentation, and data compression.
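Clustering is the simplest of these techniques to sketch. Below is a minimal k-means implementation in plain Python, assuming two-dimensional points and, for reproducibility, initializing the centroids with the first k points (practical implementations usually use random or k-means++ initialization). The points are hypothetical and carry no labels; the algorithm groups them purely by distance, alternating between assigning each point to its nearest centroid and moving each centroid to the mean of its assigned points.

```python
import math


def kmeans(points, k, iterations=10):
    """Alternate between assigning points to the nearest centroid and moving centroids."""
    centroids = list(points[:k])  # simplistic deterministic initialization
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its assigned points
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical unlabeled data with two visible groups.
points = [(1, 1), (8, 8), (1, 2), (2, 1), (9, 8), (8, 9)]
centroids, clusters = kmeans(points, k=2)
for c, members in zip(centroids, clusters):
    print(c, members)
```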

Deep Learning

Deep learning has revolutionized the field of AI in recent years, enabling machines to process and understand complex data at unprecedented levels. Deep learning models are composed of multiple layers of interconnected artificial neurons, forming neural networks with a hierarchical structure. These neural networks can automatically learn to extract high-level features and representations from raw input data. Deep learning has achieved remarkable success in areas such as image recognition, natural language processing, and speech recognition.
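The idea of stacking layers, so that later layers build on features computed by earlier ones, can be shown with a tiny two-layer network. In this Python sketch the weights are set by hand rather than learned, purely to keep the example deterministic; a deep learning framework would learn them from data with backpropagation. The hidden layer computes two intermediate features (OR and NAND of the inputs), and the output layer combines them (AND) to produce XOR, a function a single-layer perceptron cannot represent. The layer and weight names are hypothetical.

```python
def step(z):
    """Threshold activation: fire (1) if the input is positive."""
    return 1 if z > 0 else 0


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum plus bias per unit, then the activation."""
    return [step(sum(w * x for w, x in zip(row, inputs)) + b) for row, b in zip(weights, biases)]


# Hand-chosen (not learned) weights for illustration.
HIDDEN_W = [[1, 1], [-1, -1]]
HIDDEN_B = [-0.5, 1.5]  # unit 0 computes OR, unit 1 computes NAND
OUTPUT_W = [[1, 1]]
OUTPUT_B = [-1.5]       # output unit computes AND of the two hidden features


def xor_net(x1, x2):
    hidden = dense([x1, x2], HIDDEN_W, HIDDEN_B)
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_net(a, b))
```

The four printed lines reproduce the XOR truth table: `0 0 -> 0`, `0 1 -> 1`, `1 0 -> 1`, `1 1 -> 0`.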

AI Winter and Revival

The First AI Winter

Following the initial excitement and inflated expectations of the 1950s and 1960s, the field experienced a downturn in the mid-1970s known as the "first AI winter." Early systems failed to live up to optimistic predictions: in the UK, the 1973 Lighthill report sharply criticized AI research, and in the US, DARPA cut back its funding for undirected research. Funding became scarce and interest in AI declined. This winter served as a necessary recalibration, forcing researchers to reassess their approaches and address the limitations of existing AI technologies.

The Second AI Winter

A new boom followed in the early 1980s, driven largely by commercial expert systems. It ended in the late 1980s and early 1990s with the "second AI winter." Expert systems proved expensive to maintain and brittle outside their narrow domains, the market for specialized Lisp machines collapsed in 1987, and hype had once again outrun what the technology could deliver. Investments dried up, and public interest waned.

AI Revival and the Big Tech Boom

In recent years, AI has experienced a remarkable revival, fueled by advancements in computing power, big data, and algorithmic breakthroughs. Major technology companies, known as the “big tech” firms, have invested heavily in AI research, development, and implementation. Breakthroughs in deep learning, robotics, and natural language processing have sparked a new wave of AI applications, ranging from autonomous vehicles to virtual assistants. This technological resurgence has prompted widespread interest and raised hopes for a future enhanced by AI.

The Future of Artificial Intelligence

Advancements in AI Technology

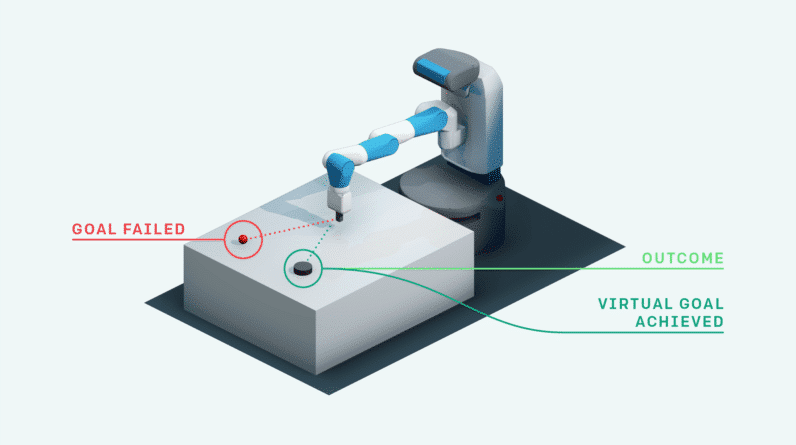

As AI continues to evolve, we can expect to witness increasingly powerful and versatile applications. Advancements in areas such as reinforcement learning, computer vision, and natural language understanding hold the promise of further transforming industries and human lives. AI-powered systems are poised to revolutionize healthcare, transportation, finance, and many other fields, impacting productivity, efficiency, and decision-making processes.

Ethical Considerations

As AI becomes more pervasive, it raises important ethical considerations. Questions about privacy, bias, and the impact on jobs require careful examination and regulation. AI developers and policymakers are grappling with the need to ensure fair and transparent AI systems that respect human rights, values, and diversity. Collaboration between technologists, ethicists, and policymakers is crucial to shape the ethical framework within which AI operates.

AI and Employment

The rise of AI has sparked concerns about the potential impact on employment. While some fear job displacement, others believe AI will create new opportunities and enhance human productivity. As the field progresses, a shift in the nature of work is likely, with tasks that can be automated being increasingly delegated to machines. This will require a focus on reskilling and upskilling the workforce to adapt to the changing landscape and ensure a smooth transition.

AI-Assisted Creativity

One exciting area of AI development is AI-assisted creativity. AI-powered systems have demonstrated remarkable abilities in generating art, music, and writing. From creating realistic paintings to composing original music, AI algorithms hold promise as collaborative tools that can augment human creativity. The intersection of AI and creativity opens up new possibilities and challenges traditional notions of authorship and artistic expression.

In conclusion, the evolution of AI has been a fascinating journey filled with triumphs, setbacks, and remarkable breakthroughs. From ancient myths to cutting-edge deep learning algorithms, AI has come a long way in replicating and augmenting human intelligence. Looking ahead, it is essential to navigate the ethical implications of AI and harness its transformative potential to create a more inclusive, innovative, and sustainable world.