Artificial Intelligence (AI) is a term that has become increasingly prevalent in our daily lives, yet many still grapple with its fundamental concepts. At its core, AI refers to the simulation of human intelligence processes by machines, particularly computer systems. These processes include learning, reasoning, problem-solving, perception, and language understanding.

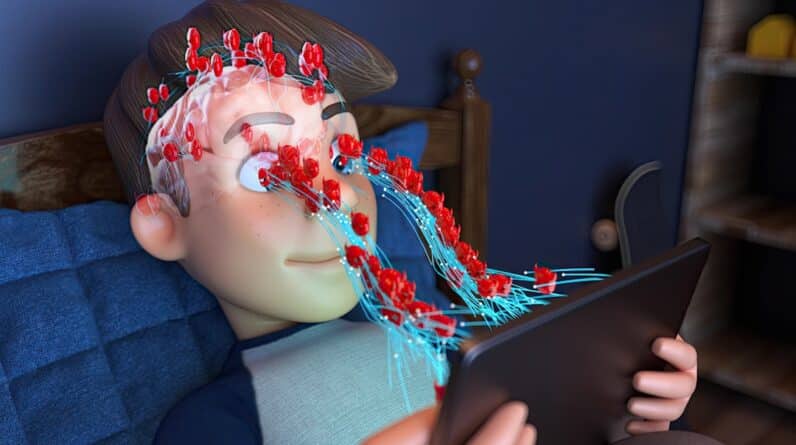

When you think about AI, consider it as a tool designed to perform tasks that typically require human intelligence. This can range from simple functions, like recognizing speech or images, to more complex operations, such as making decisions based on vast amounts of data. To truly grasp the essence of AI, it’s essential to recognize its capabilities and limitations.

While AI can analyze data at speeds and volumes far beyond human capacity, it lacks the emotional intelligence and contextual understanding that humans possess. For instance, an AI can identify patterns in data and make predictions based on those patterns, but it cannot understand the nuances of human emotions or cultural contexts. This distinction is crucial as you navigate the world of AI, helping you appreciate both its potential and its boundaries.

Key Takeaways

- AI is the simulation of human intelligence processes by machines, including learning, reasoning, and self-correction.

- The history of AI dates back to ancient times, but the modern era of AI began in the 1950s with the development of the first AI programs.

- There are three main types of AI: narrow or weak AI, general or strong AI, and artificial superintelligence.

- Machine learning is a subset of AI that allows machines to learn from data and improve their performance over time without being explicitly programmed.

- Ethical considerations in AI include issues of bias, privacy, job displacement, and the potential for misuse of AI technology.

The History and Evolution of AI

The journey of AI began in the mid-20th century, rooted in the desire to create machines that could mimic human thought processes. The term “artificial intelligence” was coined by John McCarthy in a 1955 proposal for a summer workshop held at Dartmouth College in 1956, where pioneers like McCarthy and Marvin Minsky laid the groundwork for future developments. Early AI research focused on problem-solving and symbolic methods, leading to the creation of programs that could play games like checkers and chess or prove mathematical theorems.

As you delve into this history, you’ll find that these initial efforts were marked by optimism and significant breakthroughs. However, the evolution of AI has not been linear. The field experienced periods of intense enthusiasm followed by what are known as “AI winters,” times when funding and interest dwindled due to unmet expectations.

It wasn’t until the advent of more powerful computers and the explosion of data in the 21st century that AI began to flourish again. The development of machine learning algorithms and neural networks has propelled AI into new realms, allowing for advancements in natural language processing and computer vision. Understanding this historical context helps you appreciate how far AI has come and the challenges it has faced along the way.

The Different Types of AI

As you explore the landscape of artificial intelligence, it’s important to recognize that not all AI is created equal. Broadly speaking, AI can be categorized into three types: narrow AI, general AI, and superintelligent AI. Narrow AI, also known as weak AI, is designed to perform specific tasks; virtual assistants like Siri or Alexa are familiar examples. These systems excel in their designated functions but cannot operate outside the tasks they were built and trained for.

General AI, by contrast, represents a more advanced form of intelligence that could understand, learn, and apply knowledge across a wide range of tasks, much like a human being. This level of AI remains theoretical at present, but it sparks fascinating discussions about the future of technology and its implications for society.

Finally, superintelligent AI refers to a hypothetical scenario where machines surpass human intelligence across all domains. This concept raises profound questions about control, ethics, and the future coexistence of humans and machines.

The Role of Machine Learning in AI

Machine learning is a subset of AI that has gained significant attention in recent years due to its transformative impact on various industries. At its essence, machine learning involves training algorithms to recognize patterns in data and make predictions or decisions based on those patterns without being explicitly programmed for each task. As you delve deeper into this field, you’ll discover that machine learning is what enables systems to improve over time as they are exposed to more data.
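To make the idea of learning from data rather than from hand-written rules more concrete, here is a minimal Python sketch. It assumes a made-up dataset of apartment sizes and rents (the numbers and the `fit_line` helper are hypothetical, invented purely for illustration) and estimates a straight-line relationship using ordinary least squares. Nobody types in the pricing rule; it is estimated from the examples.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how much x varies on its own.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: apartment size in square meters and monthly rent.
sizes = [30, 45, 60, 80]
rents = [600, 825, 1050, 1350]

slope, intercept = fit_line(sizes, rents)

# Use the learned relationship to estimate rent for a size not in the data.
estimated_rent = slope * 70 + intercept
print(f"learned rule: rent = {slope:.2f} * size + {intercept:.2f}")
print(f"estimated rent for 70 square meters: {estimated_rent:.2f}")
```

Real systems use far richer models and much more data, but the principle is the same: give the program different examples and it will arrive at a different rule without any change to the code.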

There are several approaches to machine learning, including supervised learning, unsupervised learning, and reinforcement learning. In supervised learning, algorithms are trained on labeled datasets, allowing them to make predictions based on input-output pairs. Unsupervised learning, on the other hand, deals with unlabeled data and focuses on identifying hidden patterns or groupings within the data.
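The difference between these two approaches is easier to see side by side. The sketch below is a simplified illustration, not a production method: the supervised part uses a basic nearest-neighbor rule on a tiny labeled dataset, and the unsupervised part uses a bare-bones two-group k-means loop on unlabeled numbers. The data and the function names `predict_nearest_neighbor` and `two_means` are hypothetical.

```python
from math import dist

# Supervised learning: every training example comes with a label.
# Hypothetical data: (hours of daylight, temperature in Celsius) -> season.
labeled_examples = [
    ((15.0, 28.0), "summer"),
    ((14.0, 25.0), "summer"),
    ((9.0, 2.0), "winter"),
    ((8.5, -1.0), "winter"),
]


def predict_nearest_neighbor(point, examples):
    """Return the label of the training example closest to `point`."""
    _, label = min(examples, key=lambda example: dist(example[0], point))
    return label


# Unsupervised learning: no labels, the algorithm looks for groupings itself.
def two_means(values, iterations=10):
    """Split 1-D values into two groups with a basic k-means loop (k = 2)."""
    centers = [min(values), max(values)]  # simple deterministic starting guess
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for value in values:
            # Assign each value to the closer of the two centers.
            closer_to_first = abs(value - centers[0]) <= abs(value - centers[1])
            groups[0 if closer_to_first else 1].append(value)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [
            sum(group) / len(group) if group else center
            for group, center in zip(groups, centers)
        ]
    return groups


if __name__ == "__main__":
    print(predict_nearest_neighbor((13.0, 22.0), labeled_examples))
    print(two_means([1.0, 1.2, 0.8, 9.7, 10.1, 10.4]))
```

Notice that the first function needs the labels to make a prediction, while the second never sees a label and simply separates the small values from the large ones.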

Reinforcement learning trains a model through trial and error: it takes actions, receives rewards for good outcomes and penalties (or no reward) for poor ones, and gradually favors the actions that earn the most reward. Understanding these methodologies equips you with the knowledge to appreciate how machine learning drives advancements in AI applications across various sectors.
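Trial-and-error learning can also be sketched in a few lines. The example below assumes a very simple setting, sometimes called a multi-armed bandit, in which each action pays a reward of 1 with some fixed probability. It uses an epsilon-greedy strategy: mostly pick the action that currently looks best, but occasionally try a random one. The function name `run_bandit`, the probabilities, and the parameter values are all hypothetical choices for illustration.

```python
import random


def run_bandit(reward_probabilities, steps=2000, epsilon=0.1, seed=0):
    """Estimate the value of each action by trial and error.

    Returns the estimated average reward of each action after `steps` tries.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(reward_probabilities)  # current guess per action
    counts = [0] * len(reward_probabilities)       # times each action was tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(estimates))
        else:
            # Exploit: pick the action that currently looks best.
            action = max(range(len(estimates)), key=lambda a: estimates[a])

        # The simulated environment answers with a reward of 1 or 0.
        reward = 1.0 if rng.random() < reward_probabilities[action] else 0.0

        # Update the running average reward for the chosen action.
        counts[action] += 1
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates


if __name__ == "__main__":
    # Three hypothetical actions that pay off 20%, 50%, and 80% of the time.
    print(run_bandit([0.2, 0.5, 0.8]))
```

The program is never told which action is best. It only sees rewards, and with enough tries its estimates typically drift toward the true payoff rates, so the most rewarding action ends up being chosen most often.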

The Ethical Considerations of AI

As you engage with the world of artificial intelligence, it’s crucial to consider the ethical implications that accompany its development and deployment. The rapid advancement of AI technologies raises significant questions about privacy, bias, accountability, and job displacement. For instance, algorithms trained on biased data can perpetuate existing inequalities, leading to unfair treatment in areas such as hiring practices or law enforcement. Recognizing these ethical dilemmas is essential for fostering responsible innovation in AI.

Moreover, the question of accountability looms large in discussions about AI ethics. When an autonomous system makes a mistake, be it a self-driving car causing an accident or an algorithm making a flawed decision, who is responsible? As you ponder these issues, it becomes clear that establishing ethical guidelines and regulatory frameworks is vital for ensuring that AI technologies are developed and used in ways that benefit society as a whole.
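The bias concern raised in this section can be checked with simple arithmetic. One common starting point is to compare how often a system makes a positive decision for each group of people; the gap between those rates is sometimes called a demographic parity difference. The sketch below assumes a hypothetical list of screening outcomes (the groups, counts, and the `selection_rates` helper are invented for illustration). A gap alone does not prove unfairness, but it is a signal worth investigating.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Compute the share of positive decisions for each group.

    `decisions` is a list of (group, selected) pairs, where `selected` is a bool.
    """
    totals = defaultdict(int)
    selected_counts = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            selected_counts[group] += 1
    return {group: selected_counts[group] / totals[group] for group in totals}


# Hypothetical screening outcomes, invented purely for illustration.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
gap = max(rates.values()) - min(rates.values())
print(rates)
print(f"selection-rate gap: {gap:.2f}")
```

In this made-up data, one group is selected 60% of the time and the other 30% of the time, which is the kind of disparity that should prompt a closer look at the training data and the decision process.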

The Real-World Applications of AI

AI is not just a theoretical concept; it has tangible applications across various industries that are reshaping how we live and work. In healthcare, for example, AI algorithms analyze medical images to assist radiologists in diagnosing conditions more accurately and swiftly. This technology not only enhances patient care but also streamlines workflows within medical facilities.

As you explore these applications further, you’ll find that AI is also revolutionizing sectors like finance through fraud detection systems that analyze transaction patterns in real time. In addition to healthcare and finance, AI is making waves in transportation with advancements in autonomous vehicles. Companies are investing heavily in developing self-driving cars that promise to reduce accidents caused by human error while improving traffic efficiency.

Furthermore, industries such as retail leverage AI for personalized shopping experiences by analyzing consumer behavior and preferences. These real-world applications illustrate how deeply integrated AI has become in our lives and highlight its potential to drive innovation across various fields.

How to Get Started with AI

If you’re intrigued by the world of artificial intelligence and want to embark on your own journey into this exciting field, there are several steps you can take to get started. First and foremost, building a solid foundation in mathematics and programming is essential. Familiarizing yourself with a language such as Python or R will enable you to work with popular machine learning libraries, such as TensorFlow and scikit-learn in Python. Online courses and tutorials can provide structured learning paths tailored to your interests and skill level.

Additionally, engaging with communities focused on AI can be incredibly beneficial. Platforms like GitHub allow you to collaborate on projects with others while gaining practical experience. Participating in forums or attending meetups can also help you connect with like-minded individuals who share your passion for technology. As you immerse yourself in this vibrant community, you’ll find opportunities for mentorship and collaboration that can accelerate your learning journey.

The Future of AI: Challenges and Opportunities

Looking ahead, the future of artificial intelligence presents both challenges and opportunities that will shape our society in profound ways. On one hand, advancements in AI hold the promise of solving complex global issues such as climate change or healthcare accessibility. By harnessing vast amounts of data and optimizing processes through intelligent algorithms, we can create innovative solutions that improve quality of life for many.

However, these advancements also come with significant challenges that must be addressed proactively. Issues related to job displacement due to automation raise concerns about economic inequality and workforce adaptation. Additionally, as AI systems become more autonomous, ensuring their safety and ethical use becomes paramount.

As you contemplate these future scenarios, it’s clear that collaboration among technologists, policymakers, and ethicists will be essential for navigating the complexities of an increasingly automated world.

In conclusion, understanding artificial intelligence requires a multifaceted approach that encompasses its basics, history, and types, the role of machine learning, ethical considerations, real-world applications, pathways into the field, and future implications. By engaging with these topics thoughtfully and critically, you position yourself not only as a consumer of technology but also as an informed participant in shaping its trajectory for generations to come.


FAQs

What is AI?

AI, or artificial intelligence, refers to the simulation of human intelligence in machines that are programmed to think and act like humans. This includes tasks such as learning, problem-solving, and decision-making.

How does AI work?

AI works by using algorithms and data to analyze patterns, make predictions, and learn from experience. Many modern AI systems rely on machine learning, where models are trained to recognize patterns and make decisions based on the data they receive.

What are the different types of AI?

There are three main types of AI: narrow or weak AI, which is designed for a specific task; general or strong AI, which has the ability to perform any intellectual task that a human can do; and artificial superintelligence, which surpasses human intelligence in every way.

What are some examples of AI in everyday life?

AI is used in various applications, such as virtual assistants like Siri and Alexa, recommendation systems on streaming platforms, autonomous vehicles, and fraud detection in banking systems.

What are the ethical considerations of AI?

Ethical considerations of AI include issues related to privacy, bias in algorithms, job displacement, and the potential for AI to be used for malicious purposes. It is important to consider the ethical implications of AI as it becomes more integrated into society.