In this article, you will explore the world of deep learning and how neural networks draw inspiration from the workings of the human brain. Discover the similarities, and the important differences, between these artificial intelligence systems and our own cognitive processes, as well as the technological advances that have made modern deep learning possible. Along the way, you will see how deep learning works and the potential it holds for shaping our future.

Understanding Deep Learning

What is Deep Learning?

Deep learning is a subfield of machine learning, itself a branch of artificial intelligence (AI), that focuses on building and training neural networks with many layers. Instead of being explicitly programmed with rules for a task, these networks learn from examples. The approach is loosely inspired by the human brain’s ability to process information and learn from experience. Deep learning algorithms learn representations of data through multiple layers of artificial neurons, with each layer transforming the output of the previous one. The "deep" in the name refers to this stack of layers.

Historical Development of Deep Learning

The development of deep learning can be traced back to the 1940s, when researchers began exploring artificial neural networks. A mathematical model of the neuron was proposed in 1943, and the perceptron followed in 1958. However, limited computational power and a lack of sufficient data kept progress slow for decades. Backpropagation was popularized as a way to train multi-layer networks in the 1980s. Convolutional neural networks (CNNs) were applied to handwritten digit recognition in the late 1980s and 1990s. The major resurgence came in the mid-2000s and especially after 2012, when a deep CNN trained on graphics processing units (GPUs) won the ImageNet image classification challenge by a wide margin. Three things drove that resurgence: improved training techniques, large labeled datasets, and affordable parallel hardware such as GPUs.

Benefits of Deep Learning

Deep learning offers several benefits that have fueled its rapid growth and adoption across industries. First, deep learning algorithms can learn from massive amounts of data, and their accuracy often keeps improving as more data becomes available. Second, they can handle complex, high-dimensional data such as images, video, and audio, which makes them well suited to computer vision, natural language processing, and speech recognition. Third, a trained model can be retrained or fine-tuned on new data, which helps it adapt as conditions change. Overall, the potential applications of deep learning are vast, and it is already transforming fields such as healthcare, finance, and transportation.

Neural Networks and Their Architecture

What are Neural Networks?

Neural networks are computational models loosely inspired by the structure and functioning of the human brain. They consist of interconnected artificial neurons, also known as nodes or units, arranged in layers. Each neuron computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function to produce an output. These outputs become the inputs to the next layer, and the process repeats until the output layer produces the network’s final result. Each connection between neurons has a weight that determines the strength of the connection. By analogy with the brain, these connections are sometimes called synapses.
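The following minimal Python sketch makes this flow of signals concrete with a forward pass through a tiny fully connected network. Several details are illustrative assumptions rather than values from a trained model: the layer sizes, the weights, and the sigmoid activation. `layer_forward` and `network_forward` are hypothetical helper names.

```python
import math

def sigmoid(z):
    """Logistic activation: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    """One fully connected layer.

    weights[j][i] is the weight on the connection from input i to neuron j.
    Neuron j outputs sigmoid(sum_i weights[j][i] * inputs[i] + biases[j]).
    """
    outputs = []
    for w_row, b in zip(weights, biases):
        z = sum(w * x for w, x in zip(w_row, inputs)) + b
        outputs.append(sigmoid(z))
    return outputs

def network_forward(inputs, layers):
    """Feed the outputs of each layer into the next, from input to output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical 2-3-1 network with hand-picked (untrained) weights.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),  # hidden layer: 3 neurons
    ([[1.0, -1.0, 0.5]], [0.2]),                                # output layer: 1 neuron
]
output = network_forward([1.0, 2.0], layers)
print(output)  # a single value between 0 and 1
```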

Components of Neural Networks: Neurons, Synapses, and Weights

Neurons are the basic building blocks of neural networks. They receive signals from other neurons, apply mathematical operations to these signals, and pass the result to subsequent neurons. Each connection between neurons is the artificial counterpart of a synapse and carries a numerical weight that scales the signal passing through it. These weights are adjusted during training to improve the network’s performance on its task. By modifying the weights, neural networks can learn to recognize patterns, make predictions, and solve complex problems.

Types of Neural Networks: Feedforward, Convolutional, and Recurrent

There are several types of neural networks, each suited to particular kinds of tasks. Feedforward neural networks are the simplest type: information flows in one direction, from the input layer to the output layer, without any loops or cycles. Convolutional neural networks (CNNs) are a specialized kind of feedforward network used mainly for image classification and processing. Their specialized layers detect and extract features from images, which makes them highly effective for tasks such as object recognition. Recurrent neural networks (RNNs) model sequential data, such as time series or text. Their connections form loops, so information from earlier inputs persists and influences how later inputs are processed. This makes them suitable for tasks like speech recognition and language translation.

The Brain and Neural Networks

Similarities between Neural Networks and the Human Brain

Neural networks are inspired by the structure and functioning of the human brain, in particular its ability to process information and learn from experience. Both are made of interconnected units that process and transmit signals: biological neurons in the brain, artificial nodes in a network. In both, the strength of the connections between units is crucial for information processing. Deep networks also loosely resemble the hierarchical organization of parts of the brain, such as the visual cortex, with successive layers learning increasingly abstract representations of the input. However, neural networks are still highly simplified models of the brain. For example, biological neurons communicate through timed electrical spikes and chemical signals, and artificial networks do not capture the brain’s full complexity.

The Neuron: Basic Building Block of Neural Networks and the Brain

The neuron is the fundamental building block of both neural networks and the human brain. In the brain, neurons are specialized cells that receive, process, and transmit electrical and chemical signals. Artificial neurons imitate this in a greatly simplified, mathematical form. Each one multiplies its inputs by corresponding weights, sums the results, adds a bias, and applies an activation function to produce an output. The classic perceptron used a step activation that either fires (outputs 1) or does not (outputs 0). Modern networks mostly use smooth activations such as sigmoid or ReLU, which produce graded outputs. The activation function is chosen when the network is designed; training adjusts the weights and biases. By connecting many artificial neurons together and adjusting their weights, neural networks can learn from data and make accurate predictions or classifications.
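A single artificial neuron fits in a few lines of Python. The sketch below uses made-up weights and a made-up bias. It applies two activations to the same weighted sum: the perceptron's step activation, which either fires or not, and a sigmoid, which produces a graded output.

```python
import math

def weighted_sum(inputs, weights, bias):
    """Multiply each input by its weight, add the products, then add the bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

def step(z):
    """Threshold activation of the classic perceptron: fires (1) or not (0)."""
    return 1 if z >= 0 else 0

def sigmoid(z):
    """Smooth activation: a graded value in (0, 1) instead of a hard 0/1."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias, activation):
    return activation(weighted_sum(inputs, weights, bias))

inputs = [1.0, 0.5]
weights = [0.8, -0.4]   # hypothetical weights
bias = -0.1             # hypothetical bias
z = weighted_sum(inputs, weights, bias)        # 0.8 - 0.2 - 0.1, about 0.5
print(neuron(inputs, weights, bias, step))     # 1
print(neuron(inputs, weights, bias, sigmoid))  # about 0.622
```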

Training Neural Networks

Supervised Learning: Training with Labeled Data

Supervised learning is a common training method for neural networks where the model is trained using labeled data. Labeled data consists of input samples paired with their corresponding correct outputs, or labels. During training, the neural network learns to map inputs to outputs by adjusting the weights of its connections. It does this by comparing its predicted output with the true label and updating the weights accordingly. This process is iteratively repeated until the model’s performance reaches a satisfactory level. Supervised learning is widely used in tasks like image classification, speech recognition, and natural language processing.
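The sketch below is a minimal illustration of learning from labeled examples. It trains a single perceptron on the four labeled input pairs of the logical AND function, using the classic perceptron learning rule. This rule is a simpler relative of the gradient-based training used in deep networks and was chosen only because it fits in a few lines. The function names are hypothetical.

```python
def predict(x, w, b):
    """Perceptron output: 1 if the weighted sum plus bias is non-negative, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else 0

def train_perceptron(samples, labels, lr=1.0, epochs=20):
    """Classic perceptron learning rule on labeled data."""
    w = [0.0] * len(samples[0])
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, y in zip(samples, labels):
            error = y - predict(x, w, b)   # 0 if correct, +1 or -1 if wrong
            if error != 0:
                mistakes += 1
                # Nudge the weights and bias toward the correct label for this example.
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                b += lr * error
        if mistakes == 0:                  # every labeled example is now correct
            break
    return w, b

samples = [[0, 0], [0, 1], [1, 0], [1, 1]]
labels = [0, 0, 0, 1]                      # logical AND
w, b = train_perceptron(samples, labels)
print([predict(x, w, b) for x in samples]) # [0, 0, 0, 1]
```

Each mistake moves the weights toward the correct label. Training stops once a full pass over the data produces no mistakes.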

Unsupervised Learning: Training without Labeled Data

Unlike supervised learning, unsupervised learning does not require labeled data. Instead, the model finds patterns or structure within the data on its own, without being told what the correct outputs are. The goal is to discover hidden representations or groupings in the input data. Depending on the method, the objective might be to:

- group similar data points into clusters,
- compress the input into a compact representation from which it can be reconstructed (as autoencoder networks do), or
- model how the data is distributed.

This approach is valuable in applications such as dimensionality reduction, anomaly detection, and clustering.
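Clustering is one of the easiest unsupervised tasks to show in code. The sketch below groups unlabeled 2-D points with k-means, a classical clustering algorithm rather than a neural network. It is included only to illustrate finding structure without labels. The data points and the starting centroids are assumptions made for the example.

```python
def kmeans(points, centroids, iterations=10):
    """Plain k-means on 2-D points, starting from the given centroids."""
    centroids = [tuple(c) for c in centroids]
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            distances = [(p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2 for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each centroid to the mean of its assigned points.
        new_centroids = []
        for c, members in zip(centroids, clusters):
            if members:
                new_centroids.append((sum(m[0] for m in members) / len(members),
                                      sum(m[1] for m in members) / len(members)))
            else:
                new_centroids.append(c)  # an empty cluster keeps its old centroid
        if new_centroids == centroids:   # assignments have stabilized
            break
        centroids = new_centroids
    return centroids, clusters

# Unlabeled points that happen to form two groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.0)]
centroids, clusters = kmeans(points, centroids=[points[0], points[3]])
print(centroids)
```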

Backpropagation Algorithm: Adjusting Weights for Learning

The backpropagation algorithm is a key component in training neural networks. During the forward pass, input data is fed into the network and outputs are computed layer by layer. The predicted outputs are then compared with the actual labels, and a loss function measures the error. Backpropagation then works backward from the output layer, using the chain rule of calculus to compute how much each weight contributed to that error, that is, the gradient of the loss with respect to every weight. An optimization algorithm such as gradient descent uses these gradients to nudge each weight in the direction that reduces the error. Repeating this over many examples lets the network make more accurate predictions over time.
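The sketch below works through backpropagation by hand for a hypothetical network with two inputs, two sigmoid hidden units, and one linear output. It is trained on a single example with a squared-error loss. The network shape, weights, and learning rate are illustrative assumptions. The code checks one backpropagated gradient against a finite-difference estimate, then applies one gradient descent step.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Two inputs -> two sigmoid hidden units -> one linear output unit."""
    W1, b1, w2, b2 = params
    h = [sigmoid(W1[j][0] * x[0] + W1[j][1] * x[1] + b1[j]) for j in range(2)]
    y_hat = w2[0] * h[0] + w2[1] * h[1] + b2
    return h, y_hat

def loss(params, x, y):
    """Squared error for a single labeled example."""
    _, y_hat = forward(params, x)
    return 0.5 * (y_hat - y) ** 2

def backprop(params, x, y):
    """Gradients of the loss with respect to every weight and bias, via the chain rule."""
    W1, b1, w2, b2 = params
    h, y_hat = forward(params, x)
    d_yhat = y_hat - y                            # dL/dy_hat
    d_w2 = [d_yhat * h[j] for j in range(2)]      # dL/dw2_j = dL/dy_hat * h_j
    d_b2 = d_yhat
    # Send the error back through w2 and the sigmoid, whose derivative is h * (1 - h).
    d_z = [d_yhat * w2[j] * h[j] * (1.0 - h[j]) for j in range(2)]
    d_W1 = [[d_z[j] * x[i] for i in range(2)] for j in range(2)]
    d_b1 = d_z
    return d_W1, d_b1, d_w2, d_b2

def gradient_descent_step(params, grads, lr):
    """Move every parameter a small step against its gradient."""
    W1, b1, w2, b2 = params
    gW1, gb1, gw2, gb2 = grads
    return (
        [[W1[j][i] - lr * gW1[j][i] for i in range(2)] for j in range(2)],
        [b1[j] - lr * gb1[j] for j in range(2)],
        [w2[j] - lr * gw2[j] for j in range(2)],
        b2 - lr * gb2,
    )

def set_w1_00(params, value):
    """Copy of params with W1[0][0] replaced (used for the numerical check)."""
    W1, b1, w2, b2 = params
    W1 = [row[:] for row in W1]
    W1[0][0] = value
    return W1, b1, w2, b2

# Hypothetical starting weights and a single labeled example.
params = ([[0.1, -0.3], [0.4, 0.2]], [0.0, 0.1], [0.5, -0.6], 0.05)
x, y = [1.0, 2.0], 1.0
grads = backprop(params, x, y)

# Compare one backpropagated gradient with a central finite-difference estimate.
eps = 1e-6
numeric = (loss(set_w1_00(params, 0.1 + eps), x, y)
           - loss(set_w1_00(params, 0.1 - eps), x, y)) / (2 * eps)
print(grads[0][0][0], numeric)                    # the two numbers should agree closely

new_params = gradient_descent_step(params, grads, lr=0.1)
print(loss(params, x, y), loss(new_params, x, y))  # the loss decreases after one step
```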

Loss Functions: Measuring the Performance of Neural Networks

Loss functions are used to quantify the difference between the predicted outputs of a neural network and the true labels in supervised learning tasks. They provide a measure of how well the model is performing, which is used to update the weights during training. Common loss functions include mean squared error (MSE) for regression tasks and categorical cross-entropy for classification tasks. The choice of the loss function depends on the nature of the problem being solved and the desired behavior of the neural network.
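Both of the loss functions mentioned above are short enough to write directly. This is a minimal Python sketch. The `eps` clamp in the cross-entropy prevents taking the logarithm of zero; it is an implementation choice, not part of the definition.

```python
import math

def mean_squared_error(y_true, y_pred):
    """Average of squared differences; a common choice for regression."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def categorical_cross_entropy(one_hot_label, probabilities, eps=1e-12):
    """-sum_k y_k * log(p_k): only the probability given to the true class contributes."""
    return -sum(y * math.log(max(p, eps)) for y, p in zip(one_hot_label, probabilities))

print(mean_squared_error([3.0, -0.5, 2.0], [2.5, 0.0, 2.0]))  # (0.25 + 0.25 + 0) / 3, about 0.167
print(categorical_cross_entropy([0, 1, 0], [0.1, 0.7, 0.2]))    # -log(0.7), about 0.357
print(categorical_cross_entropy([0, 1, 0], [0.6, 0.2, 0.2]))    # -log(0.2), about 1.609
```

Cross-entropy grows sharply as the probability assigned to the correct class falls. As a result, confident wrong answers are penalized heavily.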

Deep Learning and Feature Extraction

Learning Hierarchical Features

One of the key strengths of deep learning is its ability to learn hierarchical representations of data. Deep neural networks with multiple layers can automatically learn to extract higher-level features from raw data. Each layer specializes in detecting a specific set of features, with higher layers learning more complex and abstract representations. This hierarchical feature extraction allows deep learning models to capture intricate patterns and structures present in the data, leading to improved performance in various tasks such as image recognition, speech processing, and natural language understanding.

Feature Extraction in Computer Vision

Computer vision is one of the areas where deep learning has made significant advancements. Deep neural networks, particularly convolutional neural networks (CNNs), excel at extracting features from images. CNNs use convolutional layers that scan the input image with learnable filters, capturing local patterns and structures. These learned features are then passed through fully connected layers for classification or other tasks. By learning features directly from pixel data, deep learning models can outperform traditional computer vision algorithms in tasks such as object detection, image segmentation, and image generation.
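The scanning operation performed by a convolutional layer can be sketched as follows. Purely for illustration, the filter is hand-set to respond to a dark-to-bright vertical edge. In a real CNN, the filter values are learned during training, and each layer has many filters. Like most deep learning libraries, the sketch actually computes a cross-correlation (the filter is not flipped), which is conventionally still called convolution.

```python
def conv2d(image, kernel):
    """'Valid' 2-D convolution (strictly, cross-correlation) of a 2-D list with a filter."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Weighted sum of the image patch currently under the filter.
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output

# A 4x4 image: dark left half (0), bright right half (1).
image = [[0, 0, 1, 1] for _ in range(4)]
# Hand-set vertical-edge filter; in a CNN these weights would be learned.
kernel = [[-1, 1],
          [-1, 1]]
print(conv2d(image, kernel))  # [[0.0, 2.0, 0.0], [0.0, 2.0, 0.0], [0.0, 2.0, 0.0]]
```

The strong response in the middle column marks exactly where the image changes from dark to bright.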

Applications of Feature Extraction in Deep Learning

The ability of deep learning models to extract and learn features automatically from raw data has paved the way for a wide range of applications. Beyond computer vision, feature extraction is also crucial in natural language processing (NLP) and speech recognition. In NLP, deep learning models learn features that capture the meaning, grammatical structure, and sentiment of text. These features enable applications such as machine translation, text summarization, sentiment analysis, and chatbots. In speech recognition, models learn phonetic and acoustic features from audio and combine them with knowledge of likely word sequences. This lets them transcribe speech accurately and enables voice-controlled applications.

Understanding Activation Functions

What are Activation Functions?

Activation functions play a crucial role in neural networks because they introduce non-linearity into the model, allowing it to learn complex patterns. An activation function defines a neuron’s output given the weighted sum of its inputs. Without non-linear activation functions, each layer would compute a linear (strictly, affine) function of its inputs. A composition of such functions is itself linear, so a network of any depth could represent no more than a single layer could: only linear relationships between inputs and outputs. Popular activation functions include sigmoid, ReLU (Rectified Linear Unit), and tanh (hyperbolic tangent).
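The claim that stacked linear layers cannot do better than one linear layer is easy to check numerically. This sketch uses hypothetical weights and omits biases for brevity; with biases, the composition is still a single affine map. Two linear layers give exactly the same output as one layer whose matrix is the product of theirs, while inserting a ReLU between them changes the result.

```python
def apply(W, x):
    """Multiply matrix W by vector x: one linear layer (bias omitted)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Matrix product A @ B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def relu_vec(v):
    return [max(0, vi) for vi in v]

W1 = [[1, 2], [3, 4], [5, 6]]   # hypothetical layer 1: 2 inputs -> 3 units
W2 = [[1, 0, -1], [2, 1, 0]]    # hypothetical layer 2: 3 units -> 2 outputs
x = [1, -1]

two_linear_layers = apply(W2, apply(W1, x))
single_layer = apply(matmul(W2, W1), x)        # one layer with the combined matrix W2 @ W1
with_relu = apply(W2, relu_vec(apply(W1, x)))  # a non-linearity between the layers

print(two_linear_layers, single_layer, with_relu)  # [0, -3] [0, -3] [0, 0]
```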

Common Activation Functions: Sigmoid, ReLU, Tanh

The sigmoid activation function, also known as the logistic function, maps its input to a value between 0 and 1. It is often used in the output layer of a neural network for binary classification tasks. ReLU, on the other hand, sets negative inputs to zero and leaves positive inputs unchanged. Because its gradient is exactly 1 for any positive input, ReLU greatly mitigates the vanishing gradient problem, in which gradients shrink as they are propagated back through many layers. It also often makes training converge faster. Tanh is similar in shape to sigmoid but maps its input to a range between -1 and 1. Its outputs are centered around zero, which tends to make optimization easier than with sigmoid, so it is often used in hidden layers, for example in recurrent networks.
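The following plain-Python sketch defines the three activations and their derivatives. The derivatives show why sigmoid is prone to vanishing gradients: its slope never exceeds 0.25, while ReLU's slope is exactly 1 for every positive input.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    s = sigmoid(z)
    return s * (1.0 - s)             # at most 0.25, reached at z = 0

def tanh_grad(z):
    return 1.0 - math.tanh(z) ** 2   # at most 1.0, reached at z = 0

def relu(z):
    return max(0.0, z)

def relu_grad(z):
    return 1.0 if z > 0 else 0.0     # constant 1 for every positive input

for z in (-4.0, 0.0, 4.0):
    print(z, round(sigmoid(z), 3), round(math.tanh(z), 3), relu(z),
          round(sigmoid_grad(z), 3), round(tanh_grad(z), 3), relu_grad(z))

# Through 10 sigmoid layers, the sigmoid derivatives alone shrink a gradient
# by a factor of at most 0.25 ** 10.
print(0.25 ** 10)  # about 9.5e-07
```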

Choosing the Right Activation Function for a Neural Network

The choice of activation function depends on the nature of the problem being solved and the behavior desired from the neural network. Sigmoid suits the output of binary classifiers, where the output needs to be interpreted as a probability. ReLU is the default choice for hidden layers in much of deep learning because it mitigates vanishing gradients and speeds up training. Tanh, while similar to sigmoid, is often preferable in hidden layers because its outputs are centered around zero. Other activation functions are also available:

- Softmax turns a vector of scores into probabilities for multi-class classification.
- Leaky ReLU keeps a small slope for negative inputs. This avoids “dead” neurons that output zero for every input and stop learning.

The selection depends on the specific requirements of the model.
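Softmax and Leaky ReLU can be sketched in the same style. The Leaky ReLU slope `alpha=0.01` is a common default but is an assumption here. Inside softmax, subtracting the maximum score is a standard trick to avoid overflow; it does not change the result.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1 (multi-class output layer)."""
    m = max(logits)                          # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def leaky_relu(z, alpha=0.01):
    """Like ReLU, but negative inputs keep a small slope alpha, so their gradient is not zero."""
    return z if z > 0 else alpha * z

probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])          # [0.659, 0.242, 0.099]
print(leaky_relu(-5.0), leaky_relu(3.0))     # about -0.05, and 3.0
```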

Deep Learning Algorithms

Convolutional Neural Networks (CNN)

Convolutional neural networks (CNNs) are a class of deep learning models that have revolutionized computer vision tasks. CNNs are designed for processing data with a known grid-like structure, such as images. They consist of convolutional layers, pooling layers, and fully connected layers. Convolutional layers use learnable filters to detect local patterns and structures in the input data. Pooling layers downsample the output of convolutional layers to reduce the computation and preserve important features. Fully connected layers interpret the learned features and make predictions or classifications. CNNs have achieved remarkable results in tasks such as image classification, object detection, and image generation.
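A pooling layer is simple enough to sketch directly. This example assumes the common configuration of 2x2 max pooling with stride 2, which halves the height and width of a feature map while keeping the strongest response in each region. The feature-map values are made up.

```python
def max_pool_2x2(feature_map):
    """2x2 max pooling, stride 2: keep the largest value in each non-overlapping 2x2 block.

    If the height or width is odd, the last row or column is dropped.
    """
    pooled = []
    for r in range(0, len(feature_map) - 1, 2):
        row = []
        for c in range(0, len(feature_map[0]) - 1, 2):
            row.append(max(feature_map[r][c], feature_map[r][c + 1],
                           feature_map[r + 1][c], feature_map[r + 1][c + 1]))
        pooled.append(row)
    return pooled

fm = [[1, 3, 2, 0],
      [4, 2, 1, 5],
      [0, 1, 3, 2],
      [2, 6, 1, 1]]
print(max_pool_2x2(fm))  # [[4, 5], [6, 3]]
```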

Recurrent Neural Networks (RNN)

Recurrent neural networks (RNNs) are designed to handle sequential data by retaining information from previous inputs. An RNN maintains a hidden state, a kind of internal memory, that is updated at each step from the current input and the previous state. This lets it capture dependencies and patterns over time, because the output at each step is influenced by earlier inputs. RNNs are particularly effective in tasks such as speech recognition, machine translation, and handwriting recognition, where the context of previous inputs is crucial for understanding the current input.
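The hidden-state update can be sketched with a scalar Elman-style RNN. Real RNNs use weight matrices and vector states, and the weights here are hypothetical. The point is only that the final state still reflects an input seen two steps earlier.

```python
import math

def rnn_forward(sequence, w_x, w_h, b, h0=0.0):
    """Scalar Elman-style RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b)."""
    h = h0
    states = []
    for x in sequence:
        # The new state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Hypothetical weights; a trained RNN would learn these.
a = rnn_forward([1.0, 0.0, 0.0], w_x=0.8, w_h=0.5, b=0.0)
c = rnn_forward([0.0, 0.0, 0.0], w_x=0.8, w_h=0.5, b=0.0)
print(a[-1], c[-1])  # a's first input still shapes its final state; c stays at 0.0
```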

Generative Adversarial Networks (GAN)

Generative adversarial networks (GANs) are a class of deep learning models that consist of two interconnected neural networks: a generator network and a discriminator network. The generator network takes random noise as input and generates synthetic data samples, such as images or text. The discriminator network, on the other hand, tries to distinguish between real and fake data. The generator network aims to generate synthetic data that is indistinguishable from the real data, while the discriminator network aims to become more accurate in distinguishing between real and fake data. This adversarial process results in the generator network improving over time, producing increasingly realistic synthetic data. GANs have found applications in image generation, video generation, and data augmentation.
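The adversarial objective can be sketched with the per-sample losses typically used to train each network. The discriminator uses binary cross-entropy. The generator uses the widely used “non-saturating” loss, -log D(G(z)). The discriminator outputs below are hypothetical numbers chosen to show how the losses move, not results from a trained GAN. In practice, the losses are averaged over batches, and the two networks are updated alternately by gradient descent.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: D should output about 1 on real samples and about 0 on fakes."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: G is rewarded when D rates its samples as real."""
    return -math.log(d_fake)

# Hypothetical discriminator outputs (probability that a sample is real).
early = dict(d_real=0.9, d_fake=0.1)   # D easily spots the fakes
later = dict(d_real=0.6, d_fake=0.5)   # G's samples have become harder to tell apart

for name, d in (("early", early), ("later", later)):
    print(name, round(discriminator_loss(**d), 3), round(generator_loss(d["d_fake"]), 3))
```

As the generator improves, its loss falls while the discriminator's loss rises. This tug-of-war is the adversarial process described above.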

Challenges and Limitations of Deep Learning

Data Requirements: Need for Large Amounts of Labeled Data

A significant challenge in deep learning is the need for large amounts of labeled data for training. Deep neural networks are data-hungry models that require substantial datasets to learn representative features and generalize well to unseen data. Collecting and labeling such data can be time-consuming, labor-intensive, and expensive. This requirement for vast amounts of labeled data can hinder the applicability of deep learning to domains or industries where labeled data is scarce or hard to obtain.

Computational Power and Training Time

Another challenge in deep learning is the computational power and time required to train large-scale models. Deep neural networks consist of many layers and a large number of parameters: often millions and, in the largest models, billions. Every training example requires a forward and a backward pass through the whole network, repeated over many passes through the dataset. For large models, the total runs into many trillions of arithmetic operations or more. Hardware accelerators such as Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) have emerged to speed up training, but the need for powerful computing resources remains a challenge for many researchers and practitioners.
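A back-of-the-envelope calculation shows where the cost comes from. The sketch below counts parameters and multiply-accumulate operations for a fully connected network. The layer sizes describe a hypothetical small image classifier, far smaller than modern large models.

```python
def dense_network_cost(layer_sizes):
    """Count parameters and forward-pass multiply-accumulates (MACs) per example.

    A layer mapping n_in -> n_out units has n_in * n_out weights plus n_out biases,
    and needs n_in * n_out multiply-accumulates per example in the forward pass.
    """
    params = 0
    macs = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        params += n_in * n_out + n_out
        macs += n_in * n_out
    return params, macs

# Hypothetical classifier: 28x28 = 784 inputs, one hidden layer of 256 units, 10 classes.
params, macs = dense_network_cost([784, 256, 10])
print(params, macs)  # 203530 203264
```

Even this small model needs about 2 × 10^5 multiply-accumulates per example for the forward pass alone. Suppose training runs over 60,000 examples for 10 epochs (hypothetical figures). That comes to about 1.2 × 10^11 forward-pass operations before the backward pass is counted, and large models multiply every factor many times over.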

Interpretability and Explainability

Deep learning models are often referred to as “black boxes” because it can be hard to understand how they arrive at their predictions or classifications. Neural networks have a complex internal structure, with many layers and millions or even billions of parameters, which makes their decision-making process difficult to interpret. This lack of interpretability and explainability can limit the adoption of deep learning in domains where transparency and accountability are crucial, such as healthcare and finance. Making deep learning models more interpretable and explainable is an active area of research.

Applications of Deep Learning

Computer Vision

Deep learning has revolutionized computer vision, enabling machines to achieve high accuracy in image classification, object detection, image segmentation, and image generation. Deep convolutional neural networks, such as the models that won the ImageNet image classification competition, have matched or exceeded estimates of human accuracy on that benchmark. This does not mean they recognize objects as robustly as people do in all settings. Computer vision applications powered by deep learning are used in many domains, including autonomous vehicles, surveillance systems, medical imaging, and augmented reality.

Natural Language Processing

Natural language processing (NLP) is a field that deals with the interaction between computers and human language. Deep learning has made significant contributions to NLP, enabling machines to understand and generate human language with remarkable fluency. Deep learning models, such as recurrent neural networks and, more recently, transformer-based models, have been used for tasks like machine translation, sentiment analysis, text summarization, and chatbots. These approaches have advanced language understanding, language generation, and language representation, transforming how computers process human language.

Speech Recognition

Deep learning has played a crucial role in advancing speech recognition, allowing machines to transcribe and understand spoken language. Deep neural networks, such as recurrent neural networks and convolutional neural networks, have been successful in converting spoken words into written text, powering voice-controlled systems and virtual assistants. These models learn acoustic patterns and the statistical structure of language from extensive speech datasets. As a result, they have achieved impressive accuracy, enabling applications like transcription services, voice assistants, and automated voice responses.

The Future of Deep Learning

Advancements in Deep Learning Research

Deep learning research is an active and rapidly evolving field, with ongoing advancements in algorithms, architectures, and training techniques. Researchers are working on developing more efficient and accurate models, improving the interpretability and explainability of deep learning, and addressing ethical considerations. Recent developments in unsupervised learning, self-supervised learning, and transfer learning have shown promising results and are expected to drive further advancements in the field.

Emerging Areas of Deep Learning

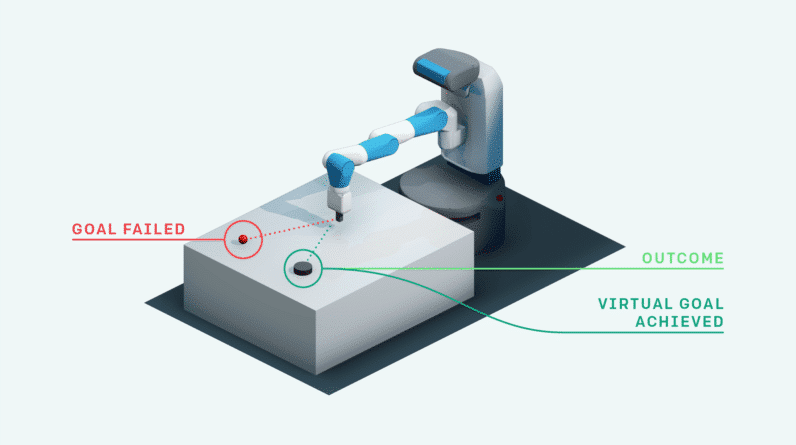

Deep learning has already made a significant impact in computer vision, NLP, and speech recognition, and several emerging areas are expected to feel profound effects as well. These include robotics, healthcare and personalized medicine, and finance. Another is deep reinforcement learning, which combines deep neural networks with learning by trial and error from rewards. Deep learning models have the potential to:

- enhance robot perception and control,
- enable autonomous systems,
- optimize financial strategies,
- predict diseases from medical data, and
- personalize treatments based on individual patients’ characteristics.

Ethical Considerations and Implications

As deep learning continues to advance and become more integrated into our daily lives, ethical considerations and implications become paramount. Issues such as data privacy, algorithmic bias, job displacement, and the responsible use of AI need to be addressed. Researchers, policymakers, and organizations are actively working on establishing guidelines, regulations, and ethical frameworks to ensure the responsible development and deployment of deep learning technologies.

In conclusion, deep learning is a powerful subfield of artificial intelligence that is loosely inspired by the human brain’s ability to process information and learn from experience. Neural networks are the primary building blocks of deep learning models, and they model the interconnected structure of the brain’s neurons and synapses in greatly simplified form. Trained on labeled or unlabeled data, deep learning models can learn to extract meaningful features, make accurate predictions, and solve complex problems in various domains. Deep learning has led to significant advancements in computer vision, NLP, and speech recognition. It also faces challenges such as data requirements, computational resources, and interpretability. Nevertheless, the future of deep learning looks promising, with advancements in research, emerging applications, and a growing emphasis on ethics and responsibility.