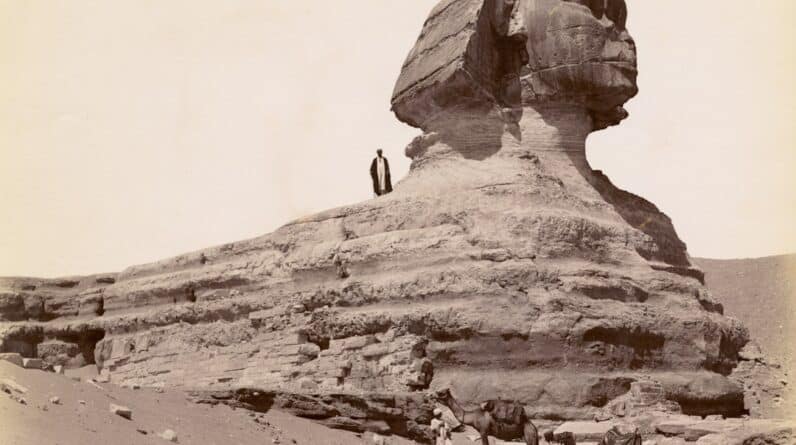

The concept of artificial intelligence (AI) has roots that stretch back to ancient history, where myths and stories hinted at the possibility of creating intelligent beings. You might find it fascinating that the idea of automata—self-operating machines—was present in various cultures, from the ancient Greeks to the Chinese. Philosophers like Aristotle pondered the nature of thought and reasoning, laying the groundwork for what would eventually evolve into modern AI.

The term “artificial intelligence” itself was coined by John McCarthy in a 1955 proposal for a summer workshop, held at Dartmouth College in 1956, where a group of researchers gathered to explore the potential of machines to simulate human intelligence. As you delve deeper into the origins of AI, you’ll discover that the journey was not merely a technological endeavor but also a philosophical one. Early thinkers grappled with questions about consciousness, reasoning, and the essence of being intelligent.

This intellectual curiosity set the stage for future advancements. The interplay between human cognition and machine capabilities became a central theme, leading to the development of algorithms and computational theories that would eventually give rise to AI as we know it today.

Key Takeaways

- AI originated from the idea of building machines that can perform tasks that typically require human intelligence.

- Early development of AI can be traced back to the 1950s and 1960s, with the creation of programs that could prove mathematical theorems and play games such as checkers and chess.

- Research and academia play a crucial role in advancing AI through the development of new algorithms, models, and theories.

- Industry and technology have heavily influenced the growth of AI, with companies investing in AI research and development to improve products and services.

- Government and military have also played a significant role in AI development, particularly in areas such as national security, defense, and surveillance.

The Early Development of AI

Pioneering Programs

You may be intrigued to learn about the early programs that demonstrated rudimentary forms of intelligence, such as the Logic Theorist and the General Problem Solver, both developed in the 1950s by Allen Newell, Herbert Simon, and Cliff Shaw. These programs were designed to mimic human problem-solving skills, showcasing the potential for machines to perform tasks that required logical reasoning. As researchers experimented with different approaches, they laid the foundation for future developments in machine learning and natural language processing.

Overcoming Challenges

However, the early years of AI were not without challenges. Despite initial enthusiasm, progress was often slow and met with skepticism. Limited hardware and computational power hindered advancements, and unmet expectations contributed to periods known as “AI winters,” when funding and interest dwindled.

A Legacy of Resilience

Yet, these setbacks did not deter dedicated researchers who continued to refine their ideas and explore new methodologies. The resilience of these pioneers ultimately paved the way for significant breakthroughs in the following decades.

The Role of Research and Academia in AI

As you explore the evolution of AI, it becomes clear that research institutions and academic environments have played a pivotal role in its development. Universities became hotbeds for innovation, where scholars collaborated across disciplines to push the boundaries of what machines could achieve. You may appreciate how interdisciplinary approaches—combining insights from computer science, psychology, neuroscience, and linguistics—have enriched the field.

This collaborative spirit fostered an environment where groundbreaking theories and technologies could flourish. Moreover, academic research has been instrumental in establishing ethical frameworks and guidelines for AI development. As you consider the implications of AI on society, you’ll recognize that many universities have taken proactive steps to address ethical concerns surrounding bias, privacy, and accountability.

By engaging students and researchers in discussions about responsible AI practices, academia has contributed significantly to shaping a future where technology aligns with human values.

The Influence of Industry and Technology in AI

The intersection of industry and technology has been a driving force behind the rapid advancements in AI. You might find it compelling how companies have embraced AI to enhance their products and services, leading to innovations that have transformed various sectors. From healthcare to finance, businesses are leveraging machine learning algorithms to analyze vast amounts of data, improve decision-making processes, and create personalized experiences for consumers.

This integration of AI into everyday applications has made technology more accessible and impactful than ever before. However, as you consider the influence of industry on AI development, it’s essential to acknowledge the competitive landscape that drives innovation. Companies are investing heavily in research and development to gain a competitive edge, often leading to breakthroughs that push the boundaries of what is possible.

This race for technological supremacy has resulted in rapid advancements but also raises questions about monopolization and ethical considerations in deploying AI systems. As you reflect on this dynamic, you may ponder how society can balance innovation with responsible practices.

The Impact of Government and Military on AI

Governments around the world have recognized the strategic importance of artificial intelligence, leading to significant investments in research and development. You may be intrigued by how national policies are shaping the future of AI technology. Countries are competing not only for technological supremacy but also for economic advantages that come with being at the forefront of AI innovation.

This competition has prompted governments to establish initiatives aimed at fostering collaboration between academia, industry, and public institutions. The military’s role in AI development is particularly noteworthy. You might find it unsettling yet fascinating how defense agencies have been at the forefront of funding AI research for applications ranging from autonomous drones to advanced surveillance systems.

While these advancements can enhance national security, they also raise ethical dilemmas regarding warfare and civilian safety. As you contemplate these issues, you may wonder how society can navigate the fine line between leveraging technology for protection while ensuring it does not infringe upon fundamental human rights.

The Evolution of AI in Popular Culture

Artificial intelligence has captured the imagination of storytellers and creators throughout history, evolving into a prominent theme in popular culture. You may recall iconic films like “2001: A Space Odyssey” or “Blade Runner,” which explore complex relationships between humans and machines. These narratives often reflect societal anxieties about technology’s impact on our lives, prompting you to consider how fiction can shape public perception of AI.

As you engage with contemporary media, you’ll notice that AI continues to be a prevalent topic in literature, film, and television. From dystopian tales warning against unchecked technological advancement to optimistic portrayals of AI as companions or helpers, these stories resonate with audiences on multiple levels. They invite you to reflect on your own relationship with technology and challenge you to think critically about the implications of creating intelligent machines.

The Future of Artificial Intelligence

Looking ahead, the future of artificial intelligence holds immense promise as well as challenges. You may be excited by the potential for AI to revolutionize industries such as healthcare, education, and transportation. Imagine a world where personalized medicine is driven by data analysis or where autonomous vehicles navigate our roads safely.

These advancements could lead to improved quality of life and increased efficiency across various sectors. However, as you envision this future, it’s crucial to consider the ethical implications that accompany such rapid progress. Questions surrounding job displacement due to automation, data privacy concerns, and algorithmic bias will require careful attention from policymakers and technologists alike.

As you ponder these issues, you may feel a sense of responsibility to advocate for a future where AI is developed thoughtfully and inclusively—ensuring that its benefits are shared equitably across society.

Ethical Considerations in the Development of AI

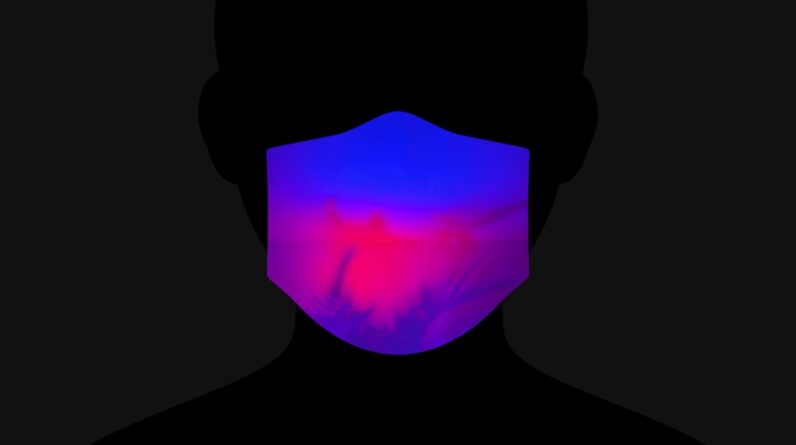

As artificial intelligence continues to evolve, ethical considerations have become paramount in guiding its development. You might find it essential to engage with questions about accountability: Who is responsible when an AI system makes a mistake? As machines become more autonomous, establishing clear lines of responsibility becomes increasingly complex.

This complexity necessitates ongoing dialogue among technologists, ethicists, policymakers, and society at large. Moreover, issues related to bias in AI algorithms demand your attention. You may be surprised to learn that biases present in training data can lead to discriminatory outcomes in decision-making processes.
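One way to make this concrete is to compare outcome rates across groups in the data a model would learn from. The sketch below is illustrative only: the records, group labels, and function names are hypothetical, and the rate gap it computes (often called a demographic parity difference) is just one of several fairness measures you might use.

```python
from collections import defaultdict

# Hypothetical toy records: (group, approved) pairs from past decisions.
HISTORY = [
    ("A", 1), ("A", 1), ("A", 1), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]


def approval_rates(records):
    """Return the fraction of approved cases for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        approved[group] += outcome
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group approval rate."""
    rates = approval_rates(records)
    return max(rates.values()) - min(rates.values())


print(approval_rates(HISTORY))  # per-group approval rates
print(parity_gap(HISTORY))      # a large gap suggests the data is skewed
```

A model trained to imitate these past decisions would likely inherit the same gap, which is why checks like this are usually run on the training data before a system is deployed.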

Addressing these biases requires a concerted effort from developers to ensure fairness and transparency in AI systems. As you reflect on these ethical challenges, you may feel empowered to advocate for responsible practices that prioritize human dignity and social justice in the age of artificial intelligence.

In conclusion, your exploration of artificial intelligence reveals a rich tapestry woven from historical origins, academic pursuits, industrial influences, governmental strategies, cultural narratives, future possibilities, and ethical considerations.

As you navigate this complex landscape, you are encouraged to engage critically with these themes and contribute to shaping a future where technology serves humanity’s best interests.


FAQs

What is artificial intelligence (AI)?

Artificial intelligence (AI) refers to computer systems designed to perform tasks normally associated with human intelligence, such as learning, problem-solving, and decision-making.

Where does artificial intelligence come from?

The concept of artificial intelligence has been around since ancient times, but the founding of the modern field is generally dated to a 1956 summer workshop at Dartmouth College. Since then, AI has evolved through research and development in computer science, cognitive psychology, and other related fields.

What are the main sources of artificial intelligence?

Artificial intelligence is primarily developed through computer programming, machine learning, and deep learning techniques. These methods involve creating algorithms and models that enable machines to learn from data, recognize patterns, and make decisions.
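To give a feel for what "learning from data" means, here is a minimal sketch of a nearest-centroid classifier, one of the simplest pattern-recognition methods. It is not how any particular AI product works; the training points, labels, and function names are made up for illustration.

```python
def fit_centroids(samples):
    """Learn one average point (centroid) per label from labeled 2-D samples."""
    sums = {}
    for (x, y), label in samples:
        sx, sy, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (sx + x, sy + y, n + 1)
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}


def predict(centroids, point):
    """Pick the label whose centroid is closest to the given point."""
    px, py = point

    def squared_distance(label):
        cx, cy = centroids[label]
        return (cx - px) ** 2 + (cy - py) ** 2

    return min(centroids, key=squared_distance)


# Hypothetical training data: two clusters of labeled points.
TRAINING = [
    ((1.0, 1.0), "small"), ((2.0, 1.0), "small"),
    ((8.0, 9.0), "large"), ((9.0, 8.0), "large"),
]

model = fit_centroids(TRAINING)    # "learn from data"
print(predict(model, (1.5, 2.0)))  # "make a decision" about a new point
print(predict(model, (7.0, 7.0)))
```

The program is never told a rule for what counts as "small" or "large"; it derives the pattern from the examples, which is the basic idea behind machine learning. Deep learning applies the same idea with far larger models and datasets.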

What are the key contributors to the development of artificial intelligence?

Key contributors to the development of artificial intelligence include researchers, scientists, and engineers from various disciplines such as computer science, mathematics, neuroscience, and cognitive psychology. Companies and organizations also play a significant role in advancing AI through funding and resources.

How is artificial intelligence used in the real world?

Artificial intelligence is used in a wide range of applications, including virtual assistants, autonomous vehicles, medical diagnosis, financial trading, and customer service. It is also used in industries such as manufacturing, agriculture, and entertainment to improve efficiency and productivity.